Applied Mathematics and Computational Sciences

Taking graphics cards beyond gaming

A highly efficient mathematical solver designed to run on graphics processors gives scientists and engineers a powerful new tool for a common computational problem.

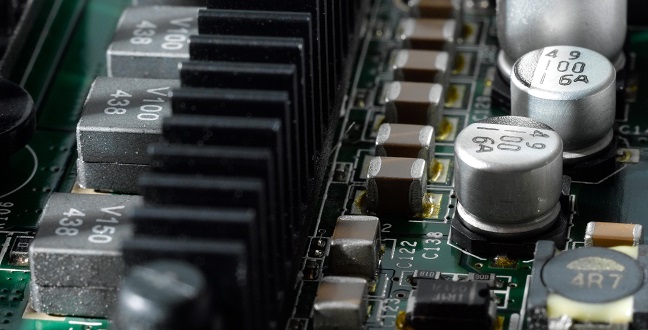

The graphics cards found in gaming computers can now be used to efficiently solve a common class of computationally intensive mathematical problems.

© KAUST Anastasia Khrenova

The graphics cards found in powerful gaming computers are now capable of solving computationally intensive mathematical problems common in science and engineering applications, thanks to a new solver developed by researchers from the KAUST Extreme Computing Research Center [1].

“One of the most common problems in scientific and engineering computing is solving systems of multiple simultaneous equations involving thousands to millions of variables,” said David Keyes, KAUST Professor of Applied Mathematics and Computational Science, who also led the research team. “This type of problem comes up in statistics, optimization, electrostatics, chemistry, and mechanics of solid bodies on Earth and gravitational interactions among celestial bodies in space.”

In typical applications, solving such systems is often the main computational cost. Accelerating the solver can therefore considerably reduce both the execution time and the energy consumed in solving the problem.

“Graphics processing units (GPUs) are very energy efficient compared with standard high-performance processors because they eliminate a lot of the hardware required for standard processors to execute general-purpose code,” explained Keyes. “However, GPUs are new enough that their supporting software remains immature. With the expertise of Ali Charara, a Ph.D. student in the Center who spent several months as an intern at NVIDIA in California, we have been able to identify many things that we can either innovate or improve upon, such as redesigning a common solver.”

The key to making a more efficient solver is optimizing the trade-off between the number of processors and the memory available to temporarily store the computational data. Memory remains expensive, so finding a way to execute more computation using less memory is critical to reducing computational cost.

“Charara designed a solver scheme that operates directly on data ‘in place’ without making an extra copy,” explained Dr. Hatem Ltaief, a Senior Research Scientist from the project’s team. “This means a system twice as large can be stored in the same amount of memory.”
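As a rough illustration of what "in place" means here, the sketch below solves a small lower-triangular system by forward substitution and writes each solved unknown back into the right-hand-side vector instead of allocating a separate result. It is a minimal pure-Python example under stated assumptions, not the team's GPU code: the function name `solve_lower_in_place` and the sample values are hypothetical and chosen only for this illustration.

```python
def solve_lower_in_place(L, b):
    """Solve L x = b for a lower-triangular L, overwriting b with x.

    Hypothetical helper for illustration only; no extra vector is allocated.
    """
    n = len(b)
    for i in range(n):
        # b[0..i-1] already hold solved unknowns; remove their contribution.
        s = b[i]
        for k in range(i):
            s -= L[i][k] * b[k]
        # Store the newly solved unknown in the slot the input occupied.
        b[i] = s / L[i][i]
    return b


L = [[2.0, 0.0, 0.0],
     [1.0, 3.0, 0.0],
     [4.0, 5.0, 6.0]]
b = [2.0, 7.0, 32.0]
solve_lower_in_place(L, b)
print(b)  # [1.0, 2.0, 3.0]
```

Because the input vector is reused for the output, the working memory is roughly the system itself rather than the system plus a copy.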

Usually, operations on simultaneous equations proceed sequentially, column by column, over the matrix of values derived from the equations. Charara recast this column-wise sweep as a series of tasks on small, computationally efficient rectangular and triangular blocks carved recursively out of the matrix. The redesigned triangular matrix-matrix multiplication achieves up to an eightfold speedup over existing implementations.
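The recursive carving into triangular and rectangular blocks can be sketched on a CPU in a few lines. The example below is an assumption-laden illustration, not the published GPU implementation: it overwrites a matrix B with the product of a lower-triangular matrix L and B by splitting L into two triangular diagonal blocks and one rectangular off-diagonal block. The name `trmm_in_place` and the `base` cutoff are hypothetical.

```python
def trmm_in_place(L, B, lo=0, hi=None, base=2):
    """Overwrite rows lo..hi-1 of B with L[lo:hi, lo:hi] times those rows.

    L is lower triangular. Illustrative sketch only (hypothetical helper).
    """
    if hi is None:
        hi = len(B)
    n = hi - lo
    cols = len(B[0]) if B else 0
    if n <= base:
        # Small triangular block: sweep bottom-up so every row reads only
        # rows of B that have not been overwritten yet.
        for i in range(hi - 1, lo - 1, -1):
            for j in range(cols):
                B[i][j] = sum(L[i][k] * B[k][j] for k in range(lo, i + 1))
        return B
    mid = lo + n // 2
    # 1. Lower-right triangular block: B2 := L22 * B2 (recursive).
    trmm_in_place(L, B, mid, hi, base)
    # 2. Rectangular block: B2 += L21 * B1, while B1 is still unmodified.
    for i in range(mid, hi):
        for j in range(cols):
            B[i][j] += sum(L[i][k] * B[k][j] for k in range(lo, mid))
    # 3. Upper-left triangular block: B1 := L11 * B1 (recursive).
    trmm_in_place(L, B, lo, mid, base)
    return B


L = [[1, 0, 0],
     [2, 1, 0],
     [3, 4, 1]]
B = [[1, 0],
     [0, 1],
     [1, 1]]
trmm_in_place(L, B, base=1)
print(B)  # [[1, 0], [2, 1], [4, 5]]
```

The order of the three steps is what allows the update to happen without a second copy of B: the lower rows are finished using the original upper rows before those upper rows are overwritten. In a GPU setting the rectangular step would presumably map onto a general matrix-matrix multiplication, which is where most of the arithmetic lands.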

“Now, every user of an NVIDIA GPU has a faster solver for a common task in scientific and engineering computing at their disposal,” said Keyes.

The solver is due to be integrated into the next scientific software library for NVIDIA GPUs.

References

1. Charara, A., Ltaief, H. & Keyes, D. Redesigning triangular dense matrix computations on GPUs. In: Dutot, P.-F. & Trystram, D. (eds) Euro-Par 2016: Parallel Processing. Lecture Notes in Computer Science, vol. 9833. Springer, Cham (2016).

You might also like

Applied Mathematics and Computational Sciences

Resilient renewable energy networks designed for the desert

Applied Mathematics and Computational Sciences

Smarter MRI image analysis for the whole heart

Applied Mathematics and Computational Sciences

Realistic scenario planning for solar power

Applied Mathematics and Computational Sciences

Bringing an old proof to modern problems

Applied Mathematics and Computational Sciences

Accounting for extreme weather to boost energy system reliability

Applied Mathematics and Computational Sciences

Past and future drought patterns across the Arabian Peninsula

Applied Mathematics and Computational Sciences

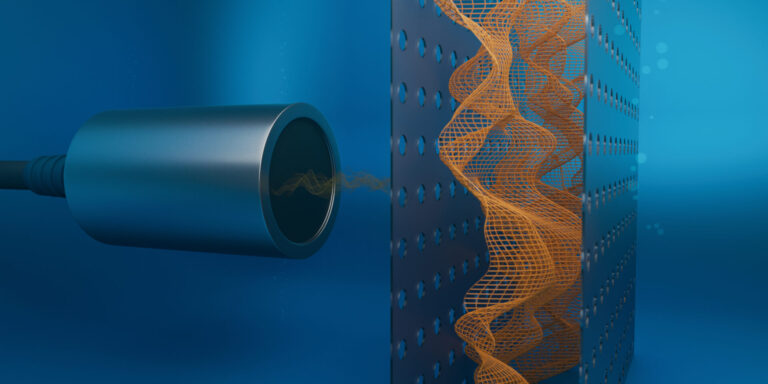

New pattern for underwater resonators

Applied Mathematics and Computational Sciences